More than two years after the start of one of the largest projects in which Univention has been involved to date, a new mail platform with over 30 million managed end users finally went online in late 2016. UCS takes care of the identity management duties for all the user accounts.

I first reported on the challenges of the project almost a year ago in the article “How can OpenLDAP with UCS be scaled to over 30 million objects?” This is no longer just theory: the project has gone live, and the LDAP directory has had to cope with thousands of accesses every second ever since.

Today, I would like to give you an update and share some of our most important findings from the go-live process.

Overview

The primary goal of the project was to migrate a large consumer mail platform, on which approx. 31 million end users each have their own account, to an updated software environment. The project involves replacing not only the front end but the entire platform. The steps required are correspondingly extensive, and operation must not be interrupted from the end users’ perspective.

The project was launched back in 2014, when Univention took over the role of identity provider for the end user accounts. These accounts serve as the user directory for mail and groupware services based on Dovecot and Open-Xchange. Unsurprisingly for a system of this size, the environment handles a high volume of traffic: millions of logins and thousands of changes need to be processed every hour.

Distribution of roles in the project

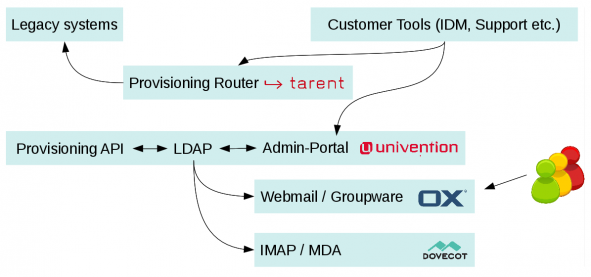

Univention, or more specifically UCS, takes on the role of the central storage location for the end user data (login, password, e-mail address, etc.) in the project. The data are read by various systems (including OX and Dovecot) and maintained by an entirely different set of systems (“customer tools”). It was therefore necessary to keep supporting the processes and APIs that had evolved over the years; these were reimplemented within the project on top of UCS’ standard interfaces (“provisioning API”). The “provisioning router” developed by tarent sends each request to the old system, to UCS, or to both, depending on the status of the mailbox, and thus ensured as smooth a transition as possible between the existing environment (“legacy systems”) and the new platform.
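The routing rule described above can be sketched roughly as follows. This is only an illustration and not tarent's implementation: the status names (`LEGACY`, `MIGRATING`, `MIGRATED`), the backend labels, and the function `route_request` are hypothetical, and the assumption that a mailbox in transit is written to both systems is ours.

```python
from enum import Enum


class MailboxStatus(Enum):
    """Hypothetical migration states of a mailbox (names are assumptions)."""
    LEGACY = "legacy"        # still served by the old system
    MIGRATING = "migrating"  # migration in progress
    MIGRATED = "migrated"    # fully served by the new platform


def route_request(status):
    """Return the backends that should receive a provisioning request."""
    if status is MailboxStatus.LEGACY:
        return ["legacy"]
    if status is MailboxStatus.MIGRATING:
        # Assumption: while a mailbox is in transit, both systems are kept in sync.
        return ["legacy", "ucs"]
    return ["ucs"]
```

Keeping the decision in one small function means the customer tools never need to know which system currently holds a mailbox.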

Structure of the IT environment

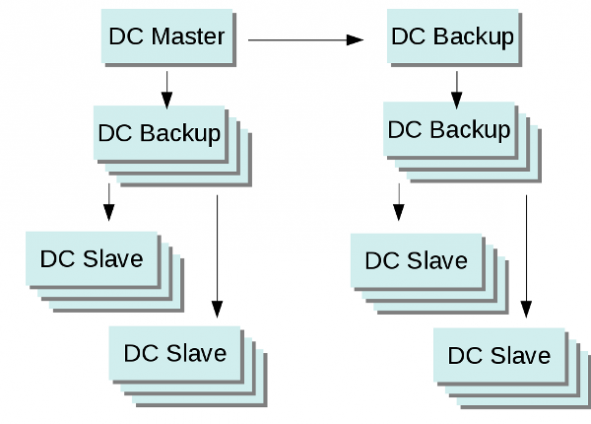

The entire environment is operated across two primary data centers, and all the services for end users are active in both. In this project, the UCS LDAP replication was configured so that as little traffic as possible is generated between the data centers while the load on the LDAP servers remains as even as possible. In contrast to the standard configuration in the product, DC slave instances therefore replicate only from DC backup systems in the same data center. This makes it possible to replicate thousands of changes per minute without noticeable delays, even with more than 50 LDAP servers running.
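The replication rule (a DC slave replicates only from a DC backup in its own data center) can be illustrated with a small sketch. The host names, the data center labels, and the round-robin distribution are assumptions made for this example; the project's actual assignment logic is not described here.

```python
from collections import defaultdict


def assign_replication_sources(backups, slaves):
    """Map each DC slave to a DC backup in the same data center.

    backups and slaves are lists of (hostname, data_center) tuples.
    Slaves are spread round-robin over the backups of their own data center.
    """
    backups_by_dc = defaultdict(list)
    for host, dc in backups:
        backups_by_dc[dc].append(host)

    next_index = defaultdict(int)
    assignment = {}
    for host, dc in slaves:
        local = backups_by_dc.get(dc)
        if not local:
            # Never fall back to the other data center: that would create
            # exactly the cross-site traffic the setup is meant to avoid.
            raise ValueError(f"no DC backup available in data center {dc!r}")
        assignment[host] = local[next_index[dc] % len(local)]
        next_index[dc] += 1
    return assignment
```

With two backups in one data center and three slaves there, the first and third slave would share a backup and the second would use the other one.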

The project-specific “provisioning API” is based on SOAP and converts incoming requests into calls to the Univention Directory Manager’s Python API. The load is distributed more evenly through the use of RabbitMQ, which manages the processing of incoming changes and active notifications for third-party systems. The processes run on an in-house cluster of UCS member servers.
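The decoupling that a message queue provides can be shown with a minimal sketch. It deliberately uses Python's standard `queue.Queue` as a stand-in for RabbitMQ and an in-memory dictionary as a stand-in for the directory; it contains no RabbitMQ or Univention Directory Manager calls, and all function names and the shape of a change are hypothetical.

```python
import queue
import threading


def accept_request(change_queue, change):
    """Front end: enqueue the change and return immediately."""
    change_queue.put(change)


def worker(change_queue, directory, notifications):
    """Back end: apply queued changes in order and record a notification."""
    while True:
        change = change_queue.get()
        if change is None:  # sentinel: shut the worker down
            break
        directory.setdefault(change["user"], {}).update(change["attrs"])
        notifications.append(("modified", change["user"]))


def process_all(changes):
    """Run one worker over the given changes and return the results."""
    change_queue = queue.Queue()
    directory, notifications = {}, []
    thread = threading.Thread(
        target=worker, args=(change_queue, directory, notifications)
    )
    thread.start()
    for change in changes:
        accept_request(change_queue, change)
    change_queue.put(None)
    thread.join()
    return directory, notifications
```

The point of the pattern is that accepting a request is cheap and fast, while the comparatively slow write to the directory happens at the pace the back end can sustain.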

Project figures

The system went straight into full operation: since the LDAP directory serves as the source for many migration processes, all the data were imported from the existing system right at the start of commissioning, and all subsequent changes have been integrated continuously ever since. As a result, up to 400,000 changes are written to LDAP per day and up to 40,000 per hour.
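To put these figures into perspective, they can be converted into writes per second. The short calculation below uses only the two numbers quoted above; since both are "up to" values, the results are upper bounds rather than measured averages.

```python
SECONDS_PER_DAY = 24 * 60 * 60
SECONDS_PER_HOUR = 60 * 60


def write_rates(changes_per_day=400_000, peak_changes_per_hour=40_000):
    """Return (daily average per second, peak-hour average per second, ratio)."""
    daily_avg = changes_per_day / SECONDS_PER_DAY
    peak_avg = peak_changes_per_hour / SECONDS_PER_HOUR
    return daily_avg, peak_avg, peak_avg / daily_avg


daily_avg, peak_avg, ratio = write_rates()
print(f"{daily_avg:.2f} writes/s over the day, {peak_avg:.2f} writes/s in the peak hour")
print(f"peak hour is {ratio:.1f}x the daily average")
```

In other words, the busiest hour carries roughly a tenth of a full day's changes, which is why the write path has to be sized for the peak and not for the average.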

The preparatory load tests revealed some very interesting limits:

Provisioning queries (SOAP):

The system as a whole can currently process at least 70 queries per second. Scaling here is relatively simple via the number of instances in the provisioning cluster, i.e., the UCS member servers processing the SOAP requests. Since the majority of LDAP accesses generated by provisioning queries are simple read operations, the DC slave systems used by the provisioning cluster are nowhere near full capacity.

LDAP and replication:

As presented in detail in the last blog article, the bottleneck in the processing of LDAP requests is the I/O performance of the underlying storage. For write operations in particular, the standard configuration of OpenLDAP depends directly on the IOPS achieved. In addition, the LDAP ACLs proved to be a significant factor in the project. Poorly ordered ACLs, in which the most commonly applied rules do not appear until the end of the ACL definition, slow down replication considerably.
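Why the order of ACL rules matters can be illustrated with a simple cost model. The sketch assumes that rules are checked from top to bottom until the first one matches; the rule names and the share of requests each rule handles are invented for the example, and the model ignores everything else that influences ACL evaluation time.

```python
def average_rules_checked(rule_order, request_share):
    """Expected number of rules examined per request under first-match evaluation.

    rule_order: rule names in the order they appear in the ACL definition.
    request_share: dict mapping each rule name to the fraction of requests it matches.
    """
    return sum(
        (position + 1) * request_share[name]
        for position, name in enumerate(rule_order)
    )


# Hypothetical traffic mix: one rule covers the vast majority of requests.
share = {"mail-attributes": 0.90, "password": 0.08, "admin": 0.02}

common_rule_last = ["admin", "password", "mail-attributes"]
common_rule_first = ["mail-attributes", "password", "admin"]

print(round(average_rules_checked(common_rule_last, share), 2))   # 2.88
print(round(average_rules_checked(common_rule_first, share), 2))  # 1.12
```

Note that reordering is only safe when the rules concerned do not overlap, because with first-match evaluation the order also determines which rule applies.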

Read accesses to the LDAP directory proved unproblematic throughout the entire project. During project preparation, special care was taken to ensure that all processes use LDAP requests that are as specific as possible, with search filters matched to the configured indices and returning no more than 10 results. The servers are thus able to process thousands of requests per second; the bottleneck in the performance tests was the processing speed of the LDAP clients.
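A specific search of this kind might look like the following sketch, which builds the parameters for an exact-match lookup with a result limit. The choice of `mailPrimaryAddress` as the indexed attribute, the returned attribute list, and the function names are assumptions for illustration; the escaping follows the rules for LDAP search filter strings (RFC 4515).

```python
def escape_filter_value(value):
    """Escape characters that have a special meaning in LDAP search filters."""
    replacements = {
        "\\": r"\5c",
        "*": r"\2a",
        "(": r"\28",
        ")": r"\29",
        "\x00": r"\00",
    }
    return "".join(replacements.get(ch, ch) for ch in value)


def build_user_search(mail_address, size_limit=10):
    """Return search parameters for an exact-match lookup on one attribute."""
    return {
        # Equality match on a single attribute: can be answered from an
        # equality index without scanning the whole directory.
        "filter": f"(mailPrimaryAddress={escape_filter_value(mail_address)})",
        # Request only the attributes the client really needs.
        "attributes": ["uid", "mailPrimaryAddress"],
        # Cap the number of entries the server may return.
        "size_limit": size_limit,
    }
```

Escaping the user-supplied value also prevents a stray `*` from turning the exact match into an expensive wildcard search.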

LDAP BIND authentication:

One of the surprising bottlenecks in the project emerged in a test of the maximum number of authentications the LDAP server could perform. The test used the standard LDAP operation “LDAP BIND”, which performs an authentication with a clear-text password while establishing a connection to the LDAP server. In OpenLDAP, however, new connections are handled serially in the core, so the performance of this step depends largely on the speed of a single CPU core; in other words, it does not scale with the number of cores in standard multiprocessor systems. Even so, an average server system can still process around 300 authentications per second. This figure is more than sufficient for a couple of hundred thousand users, but a cluster of dozens of LDAP servers would be required for more than 30 million users. We therefore decided in this project to have authentication handled by a separate service with access to the password hashes stored in LDAP.
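How a separate service can check a password against a hash read from LDAP, without performing an LDAP BIND, is sketched below. The example assumes the salted SHA-1 scheme (`{SSHA}`) that is commonly used for the `userPassword` attribute in OpenLDAP; which scheme the project actually uses is not stated here, and the function names are hypothetical.

```python
import base64
import hashlib
import hmac
import os


def make_ssha(password, salt=None):
    """Create an {SSHA} value: base64(SHA-1(password + salt) + salt)."""
    salt = os.urandom(8) if salt is None else salt
    digest = hashlib.sha1(password.encode("utf-8") + salt).digest()
    return "{SSHA}" + base64.b64encode(digest + salt).decode("ascii")


def verify_ssha(password, stored):
    """Check a clear-text password against a stored {SSHA} value."""
    prefix = "{SSHA}"
    if not stored.startswith(prefix):
        raise ValueError("unsupported hash scheme")
    raw = base64.b64decode(stored[len(prefix):])
    digest, salt = raw[:20], raw[20:]  # a SHA-1 digest is 20 bytes long
    candidate = hashlib.sha1(password.encode("utf-8") + salt).digest()
    # Constant-time comparison to avoid leaking information via timing.
    return hmac.compare_digest(candidate, digest)
```

Because each check is an independent computation on data fetched with an ordinary read, such a service can be spread across as many cores and processes as needed, which is exactly what the serial connection handling of LDAP BIND prevents.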

Lessons learned

The most important findings from this project are those already mentioned in the first blog post:

A well-defined data structure in LDAP, together with client LDAP requests that are coordinated with the indices on the server, is a fundamental design requirement for the success of the project. Combined with a well-configured infrastructure comprising a hypervisor, network and storage, this means that the main load, in other words the read accesses via IMAP, SMTP and webmail services for the end users, is unproblematic.

If you have further questions about this project, feel free to contact us via our contact form or the comment function below!